How brain research breakthrough could spark next generation of hearing devices

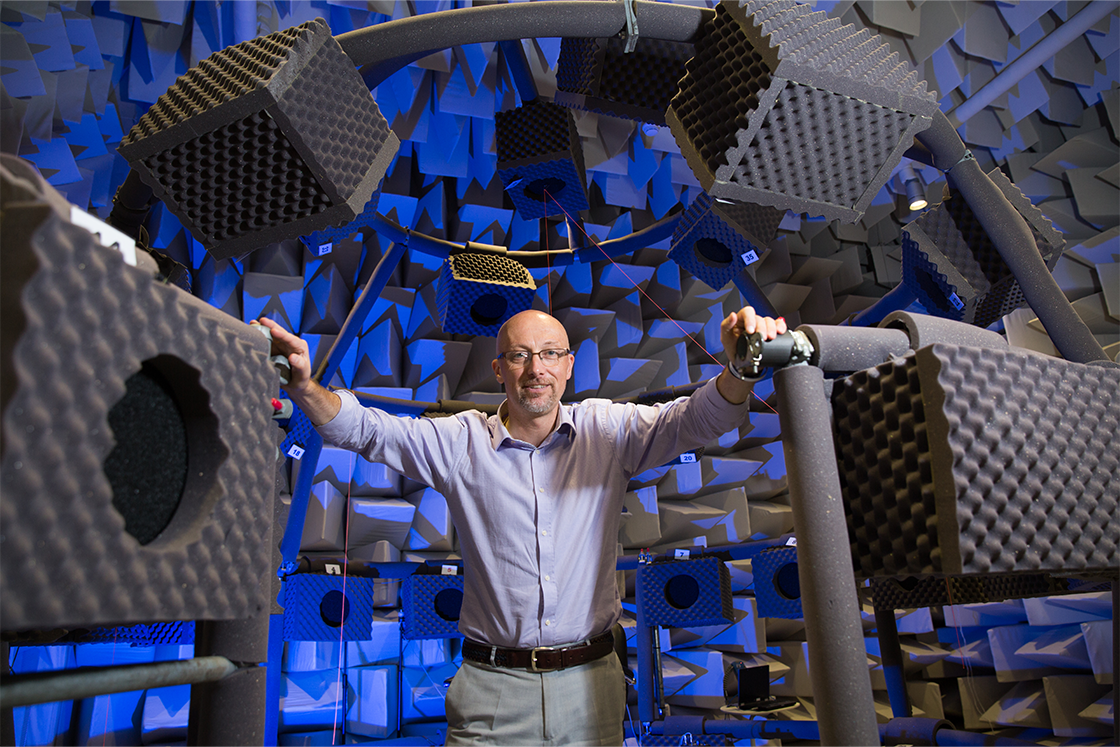

New brain research has busted a 75-year-old theory about how humans hear. Distinguished Professor David McAlpine explains how the findings could lead to better voice recognition technology as well as more advanced hearing devices.

First published on Macquarie University

A new Macquarie University study has advanced knowledge on how humans determine where sounds are coming from, and it could unlock the secret to creating a new generation of more adaptable and efficient hearing devices ranging from hearing aids to smartphones.

The holy grail for audio technologies like hearing aids and implants is to mimic human hearing, including accurately locating the source of sounds, but the grail remains elusive.

The current approach to solving this problem is based on a model developed by engineers in the 1940s to explain how humans locate a sound source from differences of just a few tens of millionths of a second in the time the sound takes to reach each ear.

The model is based on the theory that each location in space is represented by a dedicated neuron in the human brain, whose only function is to determine where a sound is coming from.

Its assumptions have been guiding and influencing research – and audio technologies – ever since.
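To make the 1940s model concrete, here is a minimal Python sketch of the kind of place code described above, usually identified with Lloyd Jeffress's 1948 place theory. It is an illustration under stated assumptions, not the researchers' method: the sample rate, the 18 cm head width and the function names (`simulate_ears`, `coincidence_bank`, `localise`) are hypothetical. Each model "neuron" applies its own internal delay to the left-ear signal and multiplies it with the right-ear signal; the detector whose delay cancels the delay between the ears responds most, and its position in the array is taken as the location.

```python
import numpy as np

FS = 96_000             # sample rate in Hz (assumed); one sample is about 10.4 microseconds
SPEED_OF_SOUND = 343.0  # metres per second in air
EAR_SPACING = 0.18      # metres, a rough human head width (assumed)

# Largest physically possible delay between the ears, in samples (about 0.52 ms here)
MAX_LAG = int(round(EAR_SPACING / SPEED_OF_SOUND * FS))


def simulate_ears(itd_samples, n=4096, seed=0):
    """Broadband noise that reaches the right ear itd_samples later than the left ear."""
    rng = np.random.default_rng(seed)
    source = rng.standard_normal(n + 2 * MAX_LAG)
    left = source[MAX_LAG : MAX_LAG + n]
    right = source[MAX_LAG - itd_samples : MAX_LAG - itd_samples + n]
    return left, right


def coincidence_bank(left, right):
    """One hypothetical detector per internal delay d: each sums left(t - d) * right(t)."""
    n = len(left)
    lags = np.arange(-MAX_LAG, MAX_LAG + 1)
    responses = np.empty(len(lags))
    for i, d in enumerate(lags):
        if d >= 0:
            responses[i] = np.dot(left[: n - d], right[d:])
        else:
            responses[i] = np.dot(left[-d:], right[: n + d])
    return lags, responses


def localise(itd_samples):
    """Place-code readout: the location is whichever dedicated detector responds most."""
    lags, responses = coincidence_bank(*simulate_ears(itd_samples))
    return int(lags[np.argmax(responses)])


best = localise(5)
print(best, "samples =", round(best / FS * 1e6, 1), "microseconds")
```

At 96 kHz a delay of 5 samples is roughly 52 microseconds, the "few tens of millionths of a second" scale the model has to resolve. The sketch also shows the cost this design implies: one dedicated detector for every resolvable delay, 101 of them in this toy setup.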

The problem is that this engineering approach is flawed.

A new research paper in the international journal Current Biology by Macquarie University Hearing researchers, Dr Jaime Undurraga, Dr Robert Luke, Dr Lindsey Van Yper, Dr Jessica Monaghan, and Distinguished Professor David McAlpine, has finally revealed what is really going on.

And it seems that, in this regard at least, we are not as different from small mammals like gerbils and guinea pigs as we might think.

A dedicated network?

The paper’s senior author, Professor McAlpine, first created ripples in the hearing research pond nearly 25 years ago by challenging the engineering model in a paper published in Nature Neuroscience.

His theory was strongly opposed at the time by the old guard, but he continued to gather evidence to support it. He was able to show the old model did not apply to one species after another, even the barn owl, which has always been the poster animal for spatial listening.

Proving it in humans, however, remained difficult, because it is much harder to observe this process in action in the human brain.

Professor McAlpine and his team have now been able to show that far from having a dedicated array of neurons with each tuned solely to one point in space, our brains are instead processing sounds in the same simplified way as many other mammals.

“We like to think that our brains must be far more advanced than other animals in every way, but that is just hubris,” he says.

“It was clear to me that this was a function that didn’t require an over-engineered brain because animals come in all shapes and sizes.

“It was always going to be the case that humans would use a similar neural system to other animals for spatial listening, one that had evolved to be energy-efficient and adaptable.”

The key to proving this lay in developing a hearing assessment that asked study participants to determine whether the sounds they heard were focussed (like foreground sounds) or fuzzy (more like background noise).

Participants also underwent electro- and magneto-encephalography (EEG and MEG) imaging while listening to the same sounds.

The imaging revealed patterns that were the same as those in smaller mammals and were explained by a multifunctional network of neurons that encoded information, including the source’s location and size.

And when they scaled the distribution of these location detectors for head size, it was also remarkably like that of rhesus monkeys, which have relatively large heads and similar cortices to humans.

“That was the final check box, and it tells us that primates that have directional hearing are using the same simplified neuronal system as small mammals,” Professor McAlpine says.

“Gerbils are like guinea pigs, guinea pigs are like rhesus monkeys, and rhesus monkeys are like humans in this regard.

“A sparse, energy-efficient form of neural circuitry is performing this function – our gerbil brain, if you like.”
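The article does not describe the alternative circuitry in detail. As a contrast with a place code, the Python sketch below assumes one simplified idea discussed in this line of research: two broadly tuned channels whose relative activity, rather than the identity of a single "winning" neuron, carries the location. The sigmoid tuning, the `SLOPE` value, the sign convention and the function names are illustrative assumptions, not the model from the Current Biology paper.

```python
import numpy as np

# Hypothetical tuning steepness: how quickly each channel's rate changes with ITD (per second)
SLOPE = 1.0 / 150e-6


def channel_rates(itd):
    """Normalised rates of two broadly tuned channels (illustrative sigmoid tuning).

    Positive itd means the sound reaches the left ear first (an assumed convention).
    """
    favours_left = 1.0 / (1.0 + np.exp(-SLOPE * itd))
    favours_right = 1.0 / (1.0 + np.exp(SLOPE * itd))
    return favours_left, favours_right


def decode_itd(favours_left, favours_right):
    """Read the ITD from the balance of the two channels, not from which neuron 'wins'."""
    balance = (favours_left - favours_right) / (favours_left + favours_right)
    # With mirror-image sigmoids, balance = tanh(SLOPE * itd / 2), so invert that
    return 2.0 * np.arctanh(balance) / SLOPE


for itd_us in (-400, -50, 0, 50, 400):
    rates = channel_rates(itd_us * 1e-6)
    print(itd_us, "us ->", round(decode_itd(*rates) * 1e6, 1), "us")
```

Here two channels cover the whole range of delays, which is one way to picture why a sparse circuit can be energy-efficient. The trade-off in this toy version is that the balance changes fastest near the midline, so with noisy firing rates the estimate would be most precise for sounds straight ahead and less precise far to either side.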

Thinking simpler to build better devices

These findings are significant not only for debunking an old theory, but also for the future design of listening machines.

They also suggest that our brains are using the same network to distinguish where sounds are coming from as they use to pick speech out of background noise.

This is important because in noisy environments, it becomes difficult even for people with healthy hearing to isolate a single sound source. For people with hearing problems, the challenge becomes insurmountable.

This ‘cocktail party problem’ is also a significant limiting factor for devices that use machine listening, like hearing aids, cochlear implants, and smartphones.

While these systems work relatively well in quiet spaces with one or two speakers, they all struggle to isolate a single voice from many, or to hear clearly when there is a lot of reverberation.

This means that sound becomes a confusing blanket of noise, forcing users of hearing devices to concentrate much harder to distinguish individual sounds.

In smartphones, it translates as an inability to understand spoken instructions, both in loud places like train platforms and in relatively quiet spaces like kitchens, where tiles and other hard surfaces can generate lots of reverberation.

Professor McAlpine says this is because current machine listening focuses on faithfully recreating a high-fidelity signal, not on how the brain has evolved to translate that signal.

This has repercussions for new technologies like large language models (LLMs), AI systems that can understand and generate human language and are increasingly used in listening devices.

“LLMs are brilliant at predicting the next word in a sentence, but they’re trying to do too much,” Professor McAlpine says.

“Being able to locate the source of a sound is the important thing here, and to do that, we don’t need a ‘deep mind’ language brain. Other animals can do it, and they don’t have language.

“Our brains don’t keep tracking a sound the whole time, which the large language processors are trying to do.

“Just like other animals, we are using our ‘shallow brain’ to pick out very small snippets of sound, including speech, and use these snippets to tag the location and maybe even the identity of the source.

“We don’t have to reconstruct a high-fidelity signal to do this, but instead we need to understand how our brain represents that signal neurally, well before it reaches a language centre in the cortex.

“This shows us that a machine doesn’t have to be trained for language like a human brain to be able to listen effectively.”

The next step for the team is to identify the minimum amount of information a sound can carry while still supporting the maximum amount of spatial listening.

In 2022, Macquarie University Hearing entered into a partnership with Google Australia, Cochlear, National Acoustic Laboratories, NextSense, and the Shepherd Centre to explore opportunities for artificial intelligence in hearing.